Most modern engineering disciplines are based on applied mathematics. An engineer or scientist observes a particular event and formulates a hypothesis (or conceptual model) which describes a relationship between the observed facts and the event being studied. In the physical sciences, conceptual models are, for the most part, mathematical in nature. Mathematical models represent an efficient, shorthand method of describing an event and the more significant factors which may cause, or affect, the occurrence of the event. Such models are useful to engineers since they provide the theoretical foundation for the development of an engineering discipline and a set of engineering design principles which can be applied to cause or prevent the occurrence of an event.

Mathematical models may be deterministic or probabilistic. An example of a deterministic model is Newton’s second law of mechanics, F = ma (force equals mass times acceleration). There is nothing indefinite about this model. A probabilistic model is one in which the results cannot be determined exactly, as they can in a deterministic model, but can only be obtained in terms of a probability or probability distribution function. An example of a probabilistic model is modern atomic theory, which describes the future location of an electron only in terms of a probability function.

The disciplines of reliability and maintainability (R/M) have commonly been based upon probabilistic or stochastic models. This is for several reasons:

It would be extremely difficult, uneconomical and probably nonproductive to identify and exactly quantify all of the variables which contribute to the failure of even simple electronic components in order to develop an exact, deterministic failure model. Thus, we are dealing with uncertainty, and with measured values which can only be stated with less than total certainty.

Probabilistic models, when applied to large samples, tend to “smooth out” individual variations so that the final, average result is simple and accurate enough for engineering analysis and design.

Since R/M parameters are defined in probabilistic terms, probabilistic parameters such as random variables, density functions, and distribution functions are utilized in the development of R/M theory.

The Reliability Theory section of this blog describes some of the basic concepts, formulas, and simple examples of application of R/M theory which are required for better understanding of the underlying principles and design techniques presented in other sections.

Practicality rather than rigorous theoretical exposition is emphasized.

Because reliability is defined in terms of probability, probabilistic parameters such as random variables, density functions, and distribution functions are utilized in the development of reliability theory. Reliability studies are concerned with both discrete and continuous random variables.

An example of a discrete variable is the number of failures in a given interval of time.

Examples of continuous random variables are the time from part installation to failure and the time between successive equipment failures.

The distinction between discrete and continuous variables (or functions) depends upon how the problem is treated and not necessarily on the basic physical or chemical processes involved. For example, in analyzing “one shot” systems such as missiles, one usually utilizes discrete functions such as the number of successes in “n” launches. However, whether or not a missile is successfully launched could be a function of its age, including time in storage, and could, therefore, be treated as a continuous function.
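The “one shot” case can be made concrete with a short sketch. It treats each launch as an independent trial with the same success probability, which is an assumption of this example rather than something stated above; the function name and the numbers are illustrative only.

```python
from math import comb


def prob_successes(k: int, n: int, p: float) -> float:
    """Binomial probability of exactly k successes in n independent launches."""
    return comb(n, k) * p**k * (1.0 - p) ** (n - k)


# Hypothetical numbers: 10 launches, each with an assumed 0.9 success probability.
n_launches = 10
p_success = 0.9

# Discrete distribution over the number of successes, k = 0..n.
pmf = [prob_successes(k, n_launches, p_success) for k in range(n_launches + 1)]

# Probability of at least 9 successes out of 10.
p_at_least_9 = sum(pmf[9:])
print(f"P(at least 9 successes) = {p_at_least_9:.4f}")
```

Treating the same problem as continuous would instead model the success probability as a function of age or storage time.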

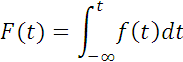

The cumulative distribution function F(t) is defined as the probability in a random trial that the random variable is not greater than t, or

$$F(t) = \int_{-\infty}^{t} f(\tau)\, d\tau$$

where f(t) is the density function of the random variable, time to failure. F(t) is termed the “unreliability function” when speaking of failure. It can be thought of as representing the probability of failure prior to some time t. If the random variable is discrete, the integral is replaced by a summation.

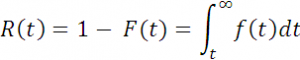

The reliability function, or the probability of a device not failing prior to some time t, is given by

$$R(t) = 1 - F(t) = \int_{t}^{\infty} f(\tau)\, d\tau \tag{2}$$

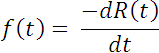

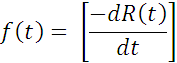

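By differentiating Equation 2 it can be shown that

$$\frac{dR(t)}{dt} = -f(t) \tag{3}$$

The probability of failure in a given time interval between t1 and t2 can be expressed by the reliability function

$$\int_{t_1}^{t_2} f(t)\, dt = \int_{t_1}^{\infty} f(t)\, dt - \int_{t_2}^{\infty} f(t)\, dt = R(t_1) - R(t_2)$$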

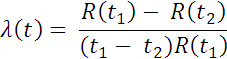

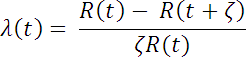

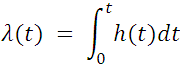
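As a numerical illustration of the functions defined so far, the sketch below evaluates F(t), R(t) and the probability of failure in an interval, R(t1) − R(t2), for an exponential time-to-failure density. The exponential form and the rate value are assumptions chosen for simplicity; any density with a known reliability function could be substituted.

```python
import math

LAMBDA = 1e-3  # assumed constant failure rate, failures per hour (illustrative)


def density(t: float) -> float:
    """f(t): exponential time-to-failure density."""
    return LAMBDA * math.exp(-LAMBDA * t)


def unreliability(t: float) -> float:
    """F(t): probability of failure prior to time t."""
    return 1.0 - math.exp(-LAMBDA * t)


def reliability(t: float) -> float:
    """R(t) = 1 - F(t): probability of no failure prior to time t."""
    return 1.0 - unreliability(t)


def prob_failure_between(t1: float, t2: float) -> float:
    """Probability of failure between t1 and t2, expressed as R(t1) - R(t2)."""
    return reliability(t1) - reliability(t2)


t1, t2 = 100.0, 200.0
p_interval = prob_failure_between(t1, t2)

# Cross-check: integrate f(t) over (t1, t2) with the midpoint rule.
steps = 10_000
dt = (t2 - t1) / steps
p_numeric = sum(density(t1 + (i + 0.5) * dt) for i in range(steps)) * dt

print(f"F({t2:.0f}) = {unreliability(t2):.5f}, R({t2:.0f}) = {reliability(t2):.5f}")
print(f"P(failure in interval) = {p_interval:.5f} (numeric check {p_numeric:.5f})")
```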

The rate at which failures occur in the interval t1 to t2, the failure rate λ(t), is defined as the probability that failure occurs in the interval, given that it has not occurred prior to t1, the start of the interval, divided by the interval length. Thus,

$$\lambda(t) = \frac{R(t_1) - R(t_2)}{(t_2 - t_1)\, R(t_1)}$$

or the alternative form

$$\lambda(t) = \frac{R(t) - R(t + \Delta t)}{\Delta t \, R(t)}$$

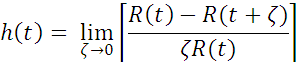

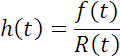

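where t = t1 and t2 = t + Δt. The hazard rate, h(t), or instantaneous failure rate, is defined as the limit of the failure rate as the interval length approaches zero, or

$$h(t) = \lim_{\Delta t \to 0} \frac{R(t) - R(t + \Delta t)}{\Delta t \, R(t)} = -\frac{1}{R(t)} \frac{dR(t)}{dt}$$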

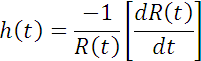

But it was previously shown (Equ. 3) that

$$f(t) = -\frac{dR(t)}{dt}$$

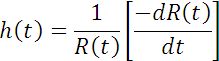

Substituting this into the expression for h(t) above, we get

$$h(t) = \frac{f(t)}{R(t)} \tag{8}$$

This is one of the fundamental relationships in reliability analysis. For example, if one knows the density function of the time to failure, f(t), and the reliability function, R(t), the hazard rate function for any time, t, can be found. The relationship is fundamental and important because it is independent of the statistical distribution under consideration.

The differential equation of Equ. 7c tells us, then, that the hazard rate is nothing more than a measure of the change in survivor rate per unit change in time.
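To illustrate that h(t) = f(t)/R(t) can be evaluated for any distribution, the sketch below applies it to a Weibull time-to-failure model and compares the result with the interval failure rate λ(t) as the interval shrinks. The Weibull form and the parameter values are assumptions made for this example only.

```python
import math

BETA = 2.0    # assumed Weibull shape parameter (illustrative)
ETA = 1000.0  # assumed Weibull characteristic life, hours (illustrative)


def reliability(t: float) -> float:
    """R(t) for a two-parameter Weibull distribution."""
    return math.exp(-((t / ETA) ** BETA))


def density(t: float) -> float:
    """f(t) = -dR(t)/dt for the same Weibull distribution."""
    return (BETA / ETA) * (t / ETA) ** (BETA - 1.0) * reliability(t)


def hazard(t: float) -> float:
    """h(t) = f(t) / R(t)."""
    return density(t) / reliability(t)


def failure_rate(t1: float, t2: float) -> float:
    """Interval failure rate: [R(t1) - R(t2)] / [(t2 - t1) * R(t1)]."""
    return (reliability(t1) - reliability(t2)) / ((t2 - t1) * reliability(t1))


t = 500.0
print(f"h({t:.0f}) = {hazard(t):.6e} per hour")
for delta in (100.0, 10.0, 1.0, 0.1):
    print(f"  interval {delta:>5} h: lambda = {failure_rate(t, t + delta):.6e}")
```

As the interval length decreases, the printed failure rate approaches the hazard rate at the start of the interval.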

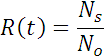

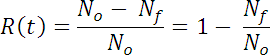

Perhaps some of these concepts can be seen more clearly by use of a more concrete example. Suppose that we start a test at time t0 with N0 devices. After some time t, Nf of the original devices will have failed, and Ns will have survived (N0 = Nf + Ns). The reliability, R(t), is given at any time t by:

$$R(t) = \frac{N_s}{N_0} = \frac{N_0 - N_f}{N_0}$$

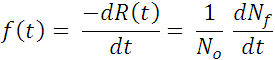

From equation 3

$$f(t) = -\frac{dR(t)}{dt} = \frac{1}{N_0} \frac{dN_f}{dt}$$

Thus, the failure density function represents the proportion of the original population, N0, which fails in the interval (t, t + Δt).

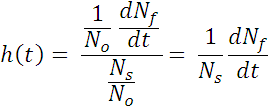

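On the other hand, from equations 8, 9 and 11

$$h(t) = -\frac{1}{R(t)} \frac{dR(t)}{dt} = \frac{N_0}{N_s} \cdot \frac{1}{N_0} \frac{dN_f}{dt} = \frac{1}{N_s} \frac{dN_f}{dt}$$

Thus, h(t) represents the proportion of the devices surviving to time t, Ns, which fail in the interval (t, t + Δt). For a given number of failures in the interval, h(t) is inversely proportional to Ns.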
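The test-population view can be sketched numerically. The failure counts below are hypothetical, invented for illustration; the code estimates R(t), f(t) and h(t) at the start of each interval, using finite differences in place of the derivatives.

```python
# Hypothetical life test: 1000 devices, cumulative failures recorded every 100 hours.
N0 = 1000
times = [0, 100, 200, 300, 400]        # hours
cum_failures = [0, 50, 110, 180, 260]  # N_f at each time (invented data)

rows = []
for i in range(len(times) - 1):
    dt = times[i + 1] - times[i]
    d_nf = cum_failures[i + 1] - cum_failures[i]  # failures in the interval
    ns = N0 - cum_failures[i]                     # survivors at interval start
    r = ns / N0                                   # R(t) = N_s / N_0
    f = d_nf / (N0 * dt)                          # f(t) ~ (1/N_0) * dN_f/dt
    h = d_nf / (ns * dt)                          # h(t) ~ (1/N_s) * dN_f/dt
    rows.append((times[i], r, f, h))

for t, r, f, h in rows:
    print(f"t={t:>3} h  R={r:.3f}  f={f:.2e}  h={h:.2e}  f/R={f / r:.2e}")
```

The last two columns agree in every row, which is the relation h(t) = f(t)/R(t) expressed in terms of counts: f(t) is normalized by the original population and h(t) by the survivors.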

Although, as can be seen by comparing equations 6 and 7a, failure rate, λ(t), and hazard rate, h(t), are mathematically somewhat different, they are usually used synonymously in conventional reliability engineering practice.

Perhaps the simplest explanation of hazard and failure rate is made by analogy. Suppose a family takes an automobile trip of 200 miles and completes the trip in 4 hours. Their average rate was 50 mph, although they drove faster at some times and slower at other times. The rate at any given instant could have been determined by reading the speed indicated on the speedometer at that instant. The 50 mph is analogous to the failure rate and the speed at any point is analogous to the hazard rate.

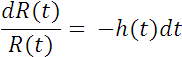

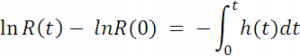

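In equation 8, a general expression was derived for hazard (failure) rate. This can also be done for the reliability function, R(t). From equation 7

$$h(t) = -\frac{1}{R(t)} \frac{dR(t)}{dt}, \qquad \text{so that} \qquad h(t)\, dt = -\frac{dR(t)}{R(t)} \tag{13}$$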

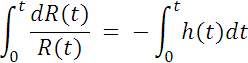

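Integrating both sides of equation 13

$$\int_0^t h(\tau)\, d\tau = -\int_{R(0)}^{R(t)} \frac{dR}{R} = -\left[\ln R(t) - \ln R(0)\right]$$

but R(0) = 1, ln R(0) = 0 and

$$R(t) = \exp\left[-\int_0^t h(\tau)\, d\tau\right] \tag{14}$$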

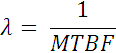

Equation 14 is the general expression for the reliability function. If h(t) can be considered a constant failure rate, λ, which is true in many cases for electronic equipment, equation 14 becomes

$$R(t) = e^{-\lambda t} \tag{15}$$

Equation 15 is used quite frequently in reliability analysis, particularly for electronic equipment. However, the reliability analyst should check that the constant failure rate assumption is valid for the item being analyzed by performing goodness of fit tests on the time to failure data. One approach to check if the constant failure rate assumption is valid is to perform a Weibull analysis using a tool such as Reliability Analytics Toolkit Weibull Analysis tool. Given time-to-failure data, this tool performs a Weibull data analysis to determine the Weibull shape parameter (β) and characteristic life (η). If the Weibull shape parameter is found to be 1.0, or relatively close to 1.0, then the failure rate can be assumed to be constant and equation 15 can be used.
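The toolkit's own method is not described here, so the following is only a minimal sketch of one common way to estimate β and η: median rank regression on complete (uncensored) time-to-failure data. The failure times are hypothetical, Benard's approximation is used for the median ranks, and the function name and the tolerance on β are choices made for this example.

```python
import math


def weibull_mrr(times_to_failure):
    """Estimate Weibull shape (beta) and characteristic life (eta) by
    median rank regression on complete (uncensored) failure times."""
    t_sorted = sorted(times_to_failure)
    n = len(t_sorted)
    # Benard's approximation to the median rank of the i-th ordered failure.
    ranks = [(i - 0.3) / (n + 0.4) for i in range(1, n + 1)]
    # Linearised Weibull CDF: ln(-ln(1 - F)) = beta * ln(t) - beta * ln(eta).
    x = [math.log(t) for t in t_sorted]
    y = [math.log(-math.log(1.0 - f)) for f in ranks]
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    beta = sxy / sxx                     # least-squares slope (y regressed on x)
    intercept = y_mean - beta * x_mean
    eta = math.exp(-intercept / beta)
    return beta, eta


# Hypothetical failure times in hours (invented for illustration).
data = [105.0, 240.0, 390.0, 610.0, 890.0, 1300.0, 2100.0]
beta, eta = weibull_mrr(data)
print(f"beta = {beta:.2f}, eta = {eta:.0f} hours")
if abs(beta - 1.0) < 0.2:  # the tolerance is an arbitrary choice for this sketch
    print("Shape parameter is near 1: a constant failure rate looks plausible.")
```

With only a handful of failures the estimate of β carries wide uncertainty, so "relatively close to 1.0" remains a judgment call; a formal goodness of fit test is a more defensible basis for the decision.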

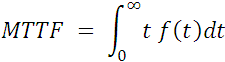

In addition to the concepts of f(t), h(t), λ(t), and R(t), previously developed, several other basic, commonly used reliability concepts require development. They are: mean time to failure (MTTF), mean life (Θ), and mean time between failure (MTBF).

Mean Time to Failure (MTTF)

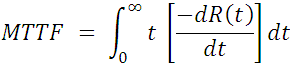

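MTTF is nothing more than the expected value of time to failure and is derived from basic statistical theory as follows:

$$\text{MTTF} = E(t) = \int_0^{\infty} t\, f(t)\, dt = \int_0^{\infty} t \left[-\frac{dR(t)}{dt}\right] dt$$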

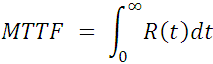

Integrating by parts and applying l’Hôpital’s rule, we arrive at the expression

$$\text{MTTF} = \int_0^{\infty} R(t)\, dt \tag{17}$$

Equation 17, in many cases, permits the simplification of MTTF calculations. If one knows (or can model from the data) the reliability function, R(t), the MTTF can be obtained by direct integration of R(t). For repairable equipment MTTF is defined as the mean time to first failure. The Reliability Analytics Toolkit Active redundancy, with repair, tool can perform this calculation.
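As a short numerical sketch of obtaining MTTF by direct integration of R(t), the code below integrates an assumed Weibull reliability function from zero to a large upper limit with the trapezoidal rule and compares the result with the closed-form Weibull mean, η·Γ(1 + 1/β). The distribution, its parameters and the truncation point of the integral are all assumptions of this example.

```python
import math

BETA = 1.5    # assumed Weibull shape parameter (illustrative)
ETA = 1000.0  # assumed characteristic life, hours (illustrative)


def reliability(t: float) -> float:
    """Weibull reliability function R(t)."""
    return math.exp(-((t / ETA) ** BETA))


def mttf_by_integration(upper: float, steps: int) -> float:
    """Trapezoidal approximation of the integral of R(t) from 0 to `upper`.

    `upper` must be large enough that R(upper) is negligible.
    """
    dt = upper / steps
    total = 0.5 * (reliability(0.0) + reliability(upper))
    total += sum(reliability(i * dt) for i in range(1, steps))
    return total * dt


mttf_numeric = mttf_by_integration(upper=20.0 * ETA, steps=200_000)
mttf_closed_form = ETA * math.gamma(1.0 + 1.0 / BETA)

print(f"MTTF by integrating R(t): {mttf_numeric:.2f} hours")
print(f"MTTF from closed form:    {mttf_closed_form:.2f} hours")
```

The same numerical integration works when R(t) is known only as a fitted or tabulated curve, provided the upper limit is far enough into the tail.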

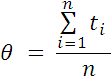

Mean Life (Θ)

The mean life (Θ) refers to the total population of items being considered. For example, given an initial population of n items, if all are operated until they fail, the mean life (Θ) is merely the arithmetic mean of the times to failure of the total population, given by

$$\Theta = \frac{1}{n} \sum_{i=1}^{n} t_i$$

where

ti = time to failure of the ith item in the population

n = total number of items in the population

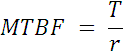

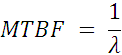

Mean Time Between Failure (MTBF)

This concept appears quite frequently in reliability literature; it applies to repairable items in which failed elements are replaced upon failure. The expression for MTBF is

$$\text{MTBF} = \frac{T}{r}$$

where

T = total operating time

r = number of failures

The Reliability Analytics Toolkit field MTBF calculator can be used to perform this calculation.
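Both mean life and MTBF reduce to simple arithmetic, as the following sketch shows. All data values are hypothetical, and the function names are made up for this example.

```python
def mean_life(times_to_failure):
    """Arithmetic mean of the times to failure of all n items in the population."""
    return sum(times_to_failure) / len(times_to_failure)


def mtbf(total_operating_time: float, failures: int) -> float:
    """MTBF = T / r for a repairable item."""
    if failures <= 0:
        raise ValueError("at least one failure is needed for a point estimate")
    return total_operating_time / failures


# Hypothetical population of five items, all run to failure (hours).
theta = mean_life([820.0, 1150.0, 960.0, 1310.0, 760.0])

# Hypothetical repairable system: 12 failures in 30,000 operating hours.
field_mtbf = mtbf(total_operating_time=30_000.0, failures=12)

print(f"mean life = {theta:.0f} hours")
print(f"MTBF      = {field_mtbf:.0f} hours")
```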

It is important to remember that MTBF only has meaning for repairable items, and, for that case, MTBF represents exactly the same parameter as mean life (Θ). More important is the fact that a constant failure rate is assumed. Thus, given the twin assumptions of replacement upon failure and constant failure rate, the reliability function is

$$R(t) = e^{-\lambda t} = e^{-t/\Theta} = e^{-t/\text{MTBF}} \tag{20}$$

and (for this case)

$$\lambda = \frac{1}{\text{MTBF}}$$

Summary of Basic Concepts

References:

1. MIL-HDBK-338, Electronic Reliability Design Handbook, 15 Oct 84

2. Bazovsky, Igor, Reliability Theory and Practice

3. O’Connor, Patrick, D. T., Practical Reliability Engineering

4. Birolini, Alessandro, Reliability Engineering: Theory and Practice